IT is what IT is.

Discover leading Managed IT Service Providers across the USA, Canada & the United Kingdom.

- 100s of leading MSPs

- Find an MSP near you

- Latest IT news for SMBs

Building Your First AI Model Inference Engine in Rust

In 2025, performance, safety, and scalability are no longer optional—they're mission critical. Rust is rapidly emerging as the go-to systems language for building fast, reliable AI infrastructure. In this guide, we’ll walk you through how to build your first AI model inference engine in Rust using cutting-edge libraries like tract, onnxruntime, and burn. Whether you're deploying lightweight models at the edge or scaling inference APIs in production, this tutorial shows how Rust gives you memory safety, zero-cost abstractions, and blazing-fast execution—without the Python overhead.

Read full post on nerdssupport.com

MSPdb™ News

Inside IT Careers in New Orleans: Your Business Lifeline

Everyone claims there’s plenty of tech talent in New Orleans, but have you ever seen what happens when a server fails at a healthcare clinic during pe...

Read full post on infotech.us

Premier Networx Launches Innovation Lab Scholars Program to Expand Access to STEM Education in the CSRA

At Premier Networx, we believe access to technology education can create life-changing opportunities. As technology continues to shape the future...

Read full post on premworx.com

Proven On-site Tech Support Services in Houston – 4 Reasons COBAIT Is the Smart Choice

Let’s be honest: when your business technology goes down, everything stops. And every minute of downtime is money out of your pocket. That’s exactly why finding the right on-site tech support services in Houston matters more than most business owners realize. COBAIT is a trusted name in business IT support in Texas, and they’ve built a reputation around showing up fast and fixing things. It doesn’t matter if you’re running a small law firm near the Galleria or managing a growing healthcare practice

Read full post on cobait.com

Top Cyber Risks Facing Law Firms Today

Law firms are prime targets for cyber criminals because they hold financial records, personally identifiable information, and confidential legal strategy. The top cyber risks today are phishing, credential theft, and ransomware, and any one of them can halt operations within hours of a breach.

The Top Cyber Risks Law Firms Face

Law firms are no

Read full post on mdltechnology.com

Enterprise Video Management Systems (VMS): What You Need to Know

Running multiple, uncoordinated video management systems across sites might feel manageable at first, but it quickly turns into a serious operational risk. What starts with antiquated technology like CCTV, layered with newer NVRs and digital video recorders, evolves into a fragmented environment. One where systems don’t communicate, visibility is limited, and security teams are left

Read full post on primesecured.com

What is Microsoft Power Platform? Fast-track your business solutions.

Discover how Microsoft Power Platform accelerates business solutions. Learn to innovate faster with low-code tools for apps, automation, and analytics.

Read full post on trndigital.com

Navigating IT Careers in Portland: From Helpdesk to Cybersecurity and Beyond

Colleague, forget the old idea that IT in Portland means fixing desktop printers and waiting for your next ticket. When a retail chain’s POS server fa...

Read full post on computersmadeeasy.com

How Non-Profit IT Support Solutions Enhance Organizational Sustainability

In an era where sustainability is paramount, non-profit organizations, particularly those serving Midwest communities, face increasing pressure to do more with limited resources. Mission impact now depends as much on technology resilience as it does on passion and purpose. Purpose-built IT services for nonprofits play a critical role in strengthening organizational sustainability by streamlining operations,

Read full post on mis.tech

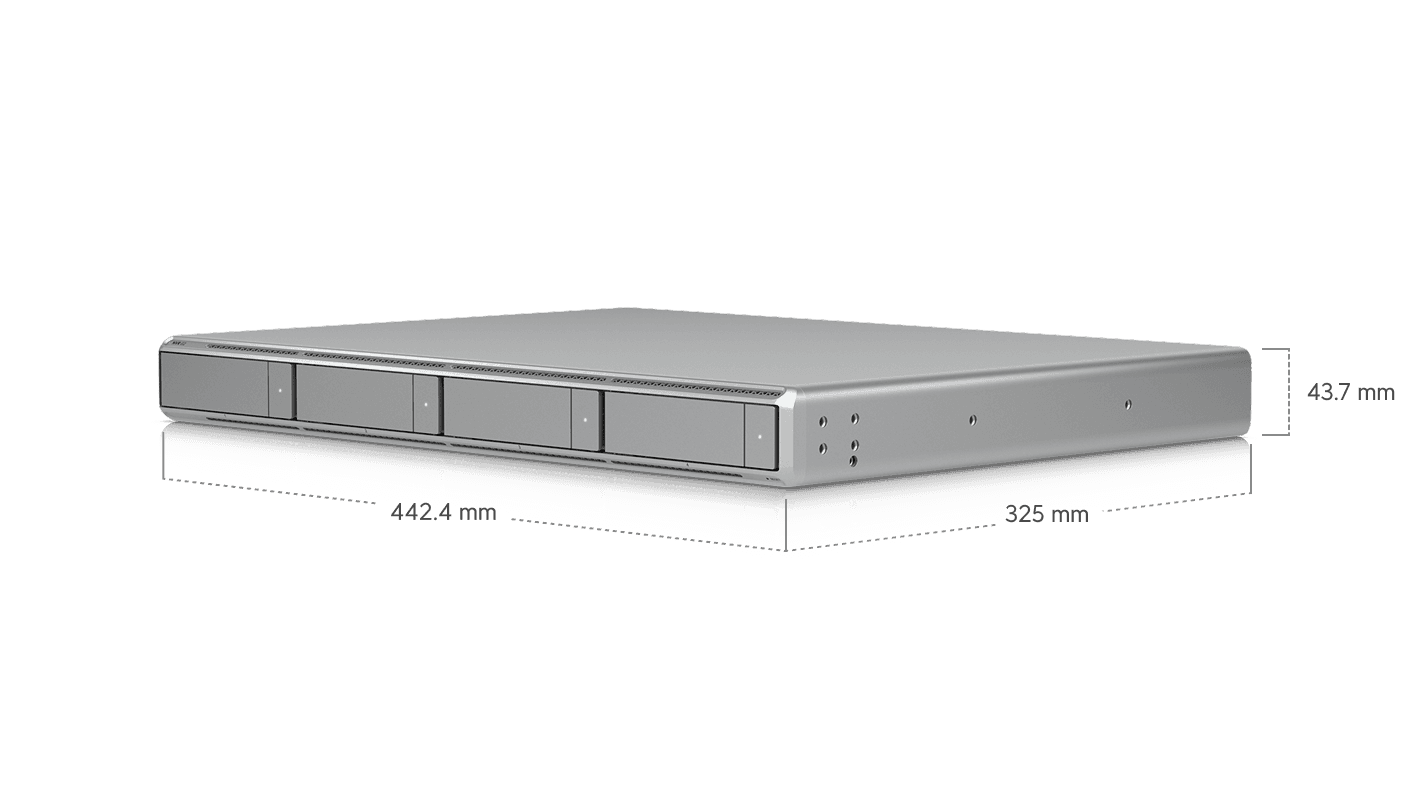

Should Your Business Upgrade to UniFi’s New UNVR G2?

Six years is a long time in tech. We deployed our first UniFi UNVR back in mid-2020, and it has been chugging along ever since, quietly recording footage and never

Read full post on dpctechnology.com

10 Must-Have Managed IT Capabilities for Healthcare

Mid-market healthcare organizations are operating under conditions their managed IT providers were not built for a decade ago. The average healthcare data breach now costs more than $7 million per incident—the highest of any industry for the fourteenth consecutive year—and a mid-size hospital can lose more than $45,000 per hour during a disruption. At the ...

Read full post on meriplex.com